Bioshock Infinite

BioShock Infinite is a first-person shooter video game developed by Irrational Games and published by 2K Games. It was released worldwide for Microsoft Windows, PlayStation 3, and Xbox 360 on March 26, 2013. Set in 1912, the game follows protagonist Booker DeWitt, a former Pinkerton agent sent to the floating air city of Columbia to find a young woman, Elizabeth, who has been held captive there for most of her life. Though Booker rescues Elizabeth, the two become involved with the city's warring factions: the nativist and elite Founders, who rule Columbia and strive to keep its privileges for White Americans, and the Vox Populi, underground rebels representing the city's underclass. (Source: Wikipedia)

For Bioshock Infinite we used the Adrenaline Action benchmarking tool, which lets us select all of the quality settings and the resolution and then run a standardized benchmark that is consistent across runs.

- Ultra Quality

- FXAA – On

- Texture Detail – Ultra

- Texture Filtering – 16x Aniso

- Dynamic Shadows – Ultra

- Postprocessing – Normal

- Light Shafts – On

- Ambient Occlusion – Ultra

- Level of Detail – Ultra

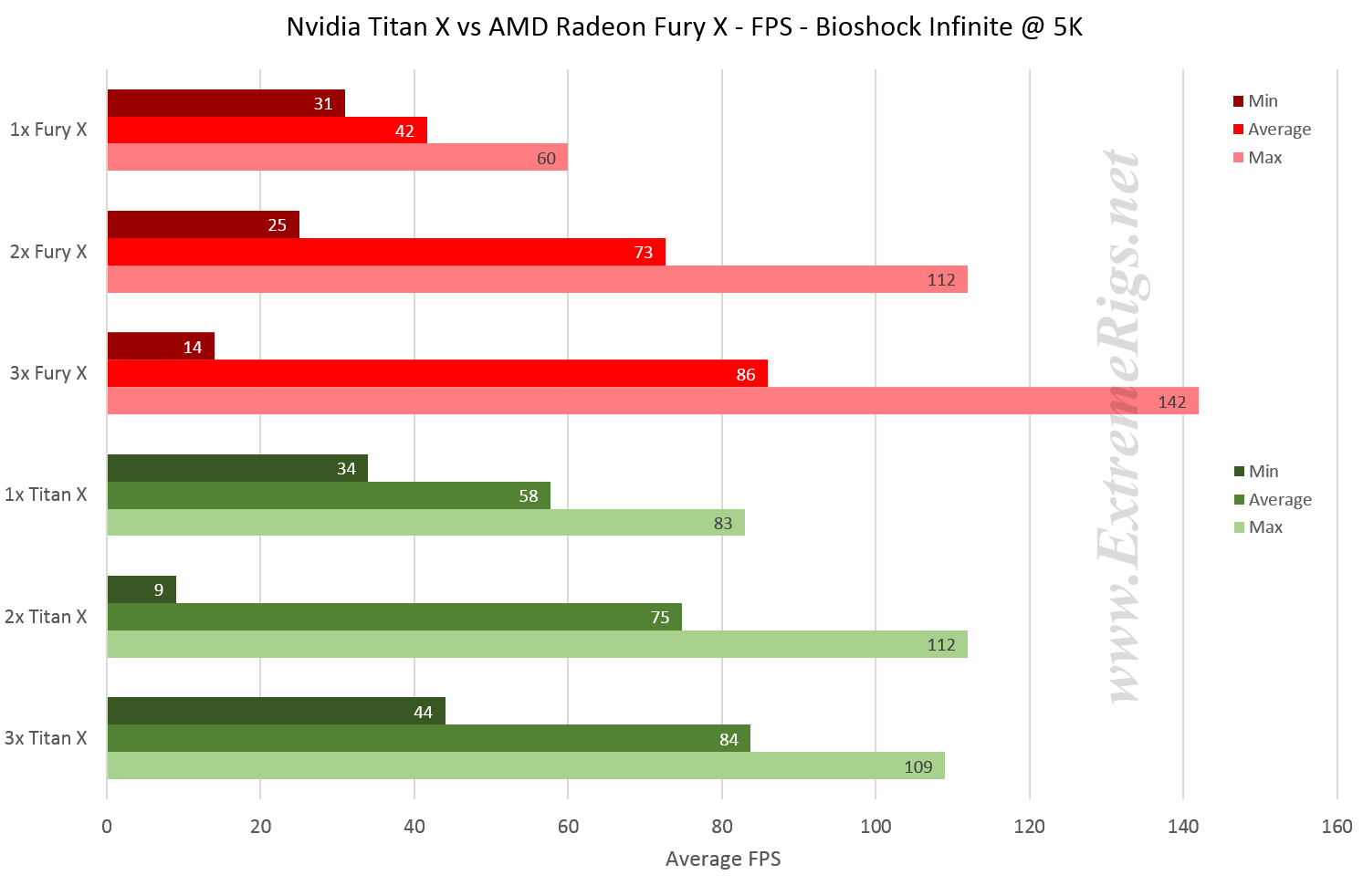

Let’s take a look at the FPS performance across all 6 setups (min, average, max):

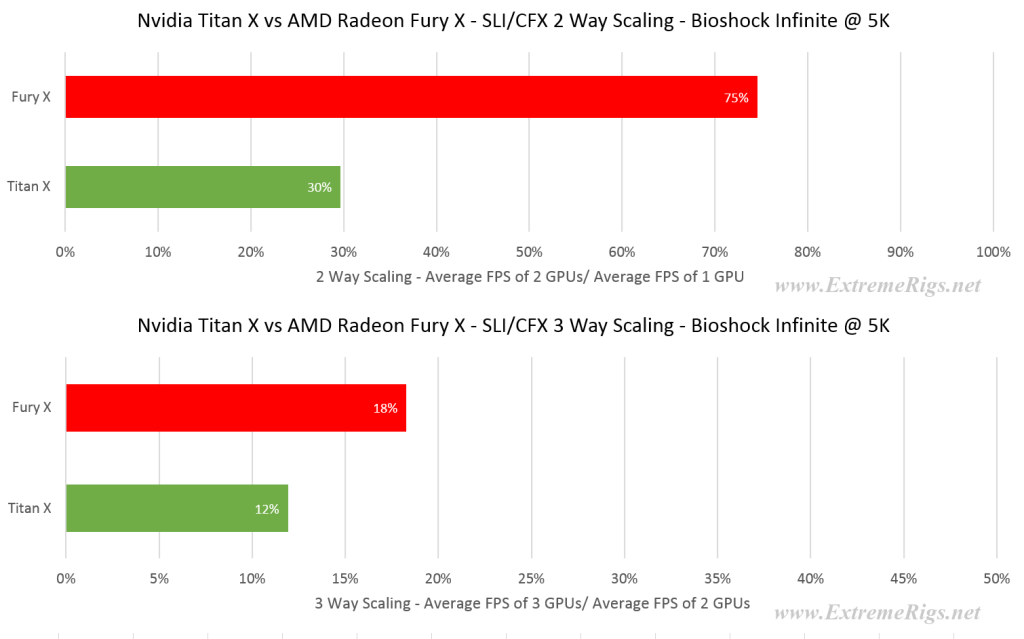

Unlike in BF4, Nvidia and AMD trade blows in this title. A single Titan X performs very well against a single Fury X; however, it doesn't scale as well, which lets AMD roughly match it with 2-way setups and overtake it at 3-way:

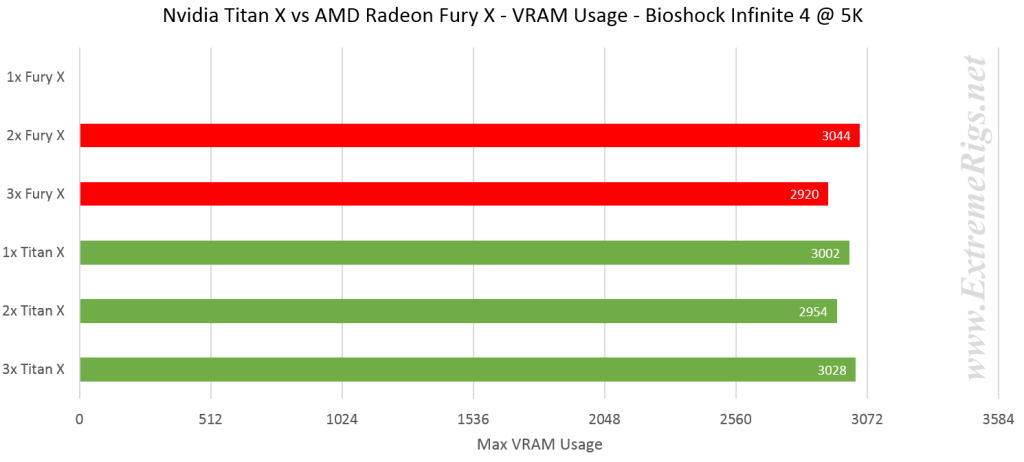
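Scaling figures like these compare each multi-GPU frame rate with the single-GPU frame rate. A minimal sketch, assuming scaling is expressed both as a speedup over one card and as an efficiency relative to perfect linear scaling (the article does not state its exact formula, and the frame rates in the usage line are made-up placeholders, not our results):

```python
def scaling(fps_single: float, fps_multi: float, gpus: int) -> tuple[float, float]:
    """Return (speedup, efficiency) of a multi-GPU run versus one GPU.

    speedup    = fps_multi / fps_single
    efficiency = speedup / gpus   (1.0 means perfect linear scaling)
    """
    if fps_single <= 0 or gpus < 1:
        raise ValueError("fps_single must be positive and gpus must be >= 1")
    speedup = fps_multi / fps_single
    return speedup, speedup / gpus


# Hypothetical frame rates, for illustration only.
speedup, efficiency = scaling(fps_single=50.0, fps_multi=85.0, gpus=2)
print(f"2-way: {speedup:.2f}x speedup, {efficiency:.0%} efficiency")
```

Efficiency makes it easy to see when adding a card stops paying off: a third GPU that barely raises FPS shows up as a sharp drop in the efficiency figure even if the speedup still creeps upward.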

3-way scaling is low because frame rates are already in the 80s with 2-way setups. This may indicate that factors beyond the GPUs are starting to limit performance. Let's check VRAM usage to make sure we're under that pesky 4GB limit:

The single Fury X run wasn’t datalogged on VRAM, however it should be similar to the rest. At 3GB of usage there is plenty of margin.

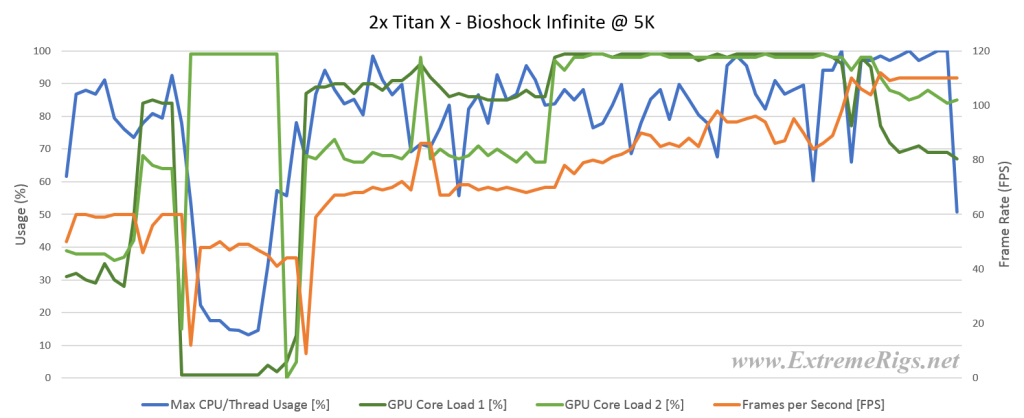

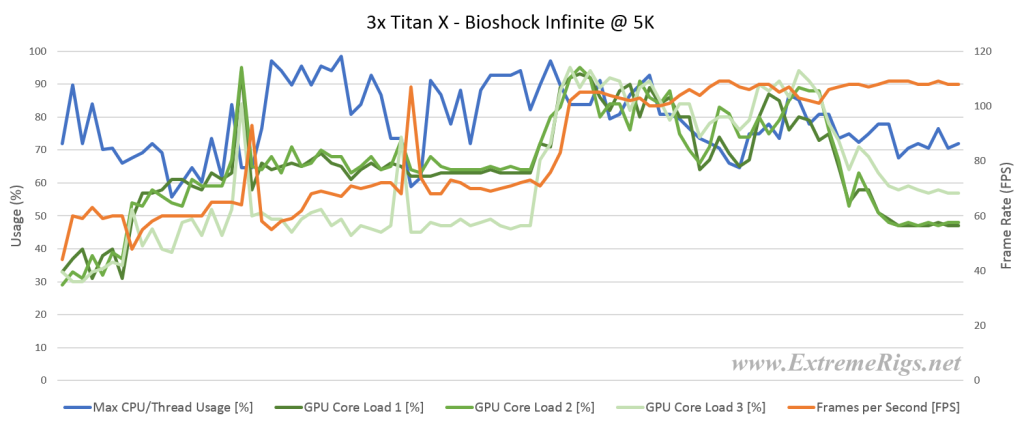

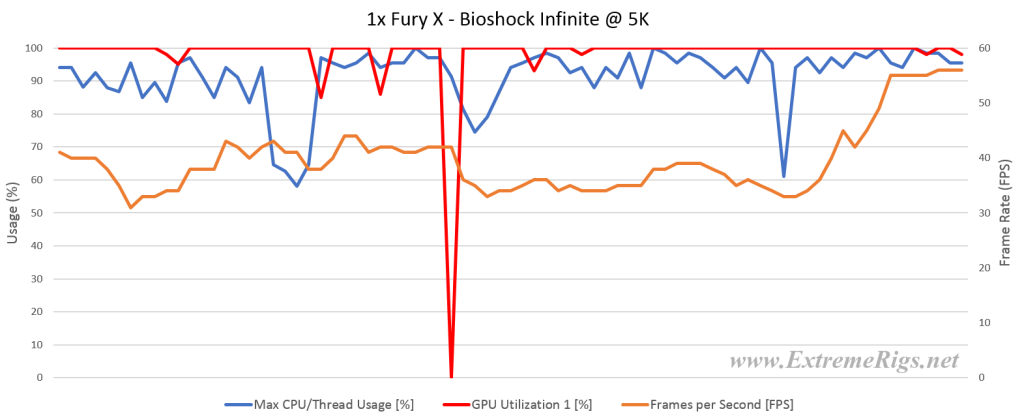

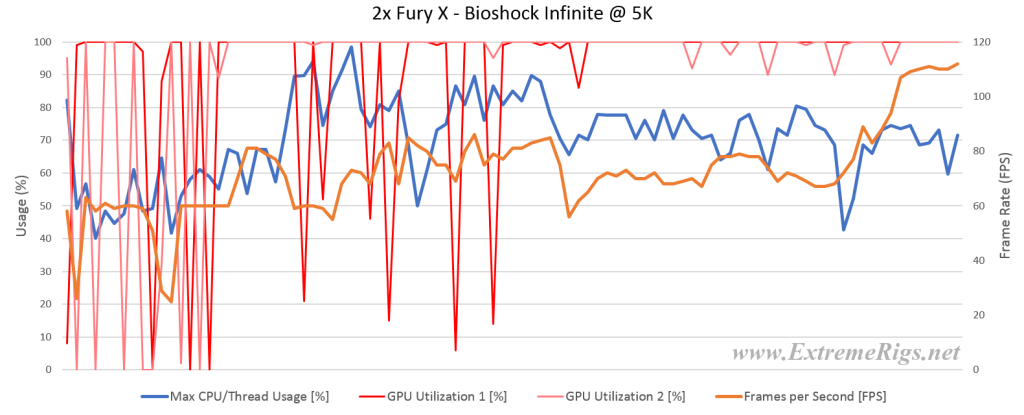

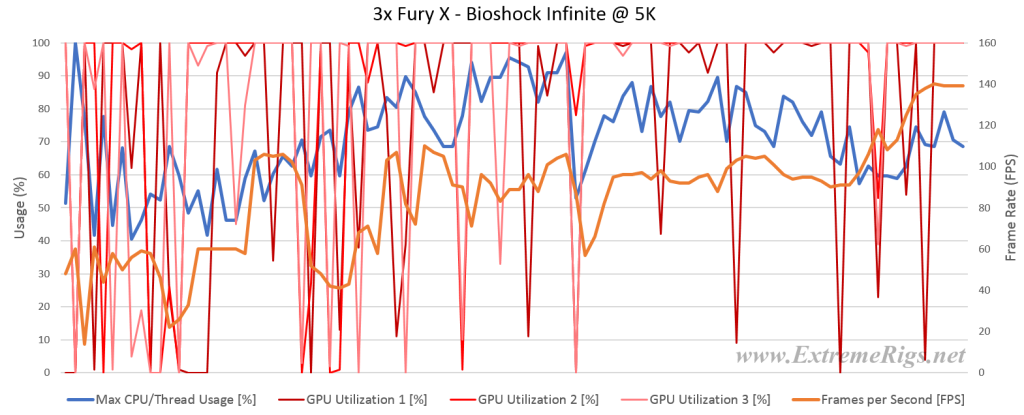

The resource usage and FPS plots show a familiar pattern: as the number of GPUs increases, so does the number of spikes to 0% usage. However, in Bioshock this doesn't usually affect performance, since the spikes rarely coincide with dips in FPS:

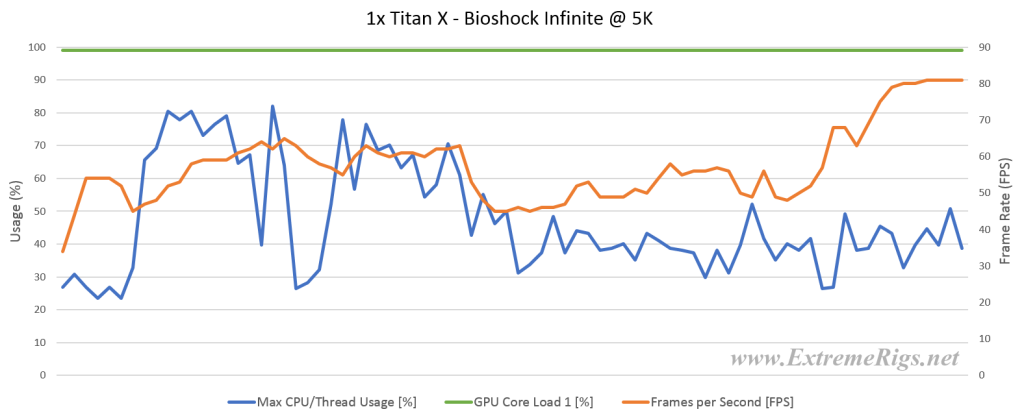
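Whether the 0% usage spikes actually hurt performance can be checked by lining up the GPU usage and FPS logs sample by sample and counting how many spikes coincide with a frame-rate dip. A minimal sketch, assuming both logs are sampled on the same clock and that a "dip" means FPS falling below a fraction of the run's average (the 80% threshold and the function name are illustrative assumptions, not the method we used to judge the plots):

```python
from statistics import mean


def spikes_with_dips(usage_pct: list[float], fps: list[float],
                     dip_fraction: float = 0.8) -> tuple[int, int]:
    """Count 0% GPU-usage samples and how many of them coincide with an FPS dip.

    A sample counts as a dip when its FPS is below dip_fraction * average FPS.
    Both lists must be aligned sample-for-sample.
    """
    if len(usage_pct) != len(fps):
        raise ValueError("usage and FPS logs must have the same length")
    threshold = dip_fraction * mean(fps)
    spike_idx = [i for i, u in enumerate(usage_pct) if u == 0]
    dips = sum(1 for i in spike_idx if fps[i] < threshold)
    return len(spike_idx), dips


# Hypothetical aligned samples: two usage spikes, only one lines up with a dip.
usage = [98, 97, 0, 99, 96, 0, 98]
frames = [85, 84, 83, 86, 85, 60, 84]
print(spikes_with_dips(usage, frames))
```

A high spike count with a low dip count matches what the Bioshock plots suggest: the spikes are visible in the usage graph but mostly don't show up as stutter.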

For Nvidia, the logs look uncharacteristically strange:

For the single Titan X things look clean, of course, but the 2-way SLI setup shows some very strange behaviour:

The 3-way SLI setup is clearly not using much of its potential processing power. Both experiences were still judged to be smooth.

So for Bioshock Infinite, the edge goes to the Titan X: a single card is enough to power the game, and in that single-GPU case it holds a sizeable lead over AMD.

To be honest, I started reading this article thinking, "Well, how good can it be? ERs are watercooling guys, so nah, can't expect too much." But after reading the whole thing, I have to say this is by far one of the best comparative reviews I've ever seen, and it really met all my expectations. Hats off to you guys!

Say, pre-release rumors pitched the FuryX as a TITAN X competitor, but AMD later scaled that claim down to the 980 Ti. So I think a comparison with the 980 Ti would have been a better matchup (and a seat-clenching brawl), as would clock-for-clock performance metrics, but nonetheless, this is way too good!

About the FuryX maxing out its VRAM in a few games here: it also suffers similar performance degradation at 4K and below. As you may have seen in other reviews, the FuryX does worse than the competition below 4K, and even worse at 1080p. I've dug through every review out there but haven't found the reason. What could it be?

And that scaling on Nvidia: you know Nvidia claims their SLI bridge isn't a bottleneck, and so it is proved here :P. When AMD introduced XDMA, I believed AMD had taken the right path: bridges will run short of bandwidth sooner or later, and PCIe already has room to carry the data the bridges used to handle. So XDMA made (well, now it's "makes" :D) sense!

But it’s sad to see AMD not delivering smoother experience overall. If they could handle that thing, this should certainly deserved the choice over any of those higher average FPS of Titan X. But I think AMD is working on Win10 & DX12 optimized drivers and trying to mend it with bandages for now.

My only complaints were the choice of the TitanX over the 980 Ti and the lack of clock-for-clock performance, but other than that, this is a great review! Hats off to you again!

Agreed with Frozen Fractal. I was more than pleasantly surprised by the quality of this review, and I hope you do more of these in the future. A 980 Ti would have been nice to see too, given the price.

Keep up the great work!

Thanks! I agree the 980 Ti would have been a better comparison, since then prices would have lined up. "Sadly" we only had Titan Xs, and we weren't willing to go out and buy another 3 GPUs to make that happen. However, if anyone wants to send us some, we'd gladly run 'em, haha. I was impressed with AMD's results but saddened that, after all the work on frame pacing, things still aren't 100% yet. Hopefully

Question: you overclocked the Titan X to 1495MHz, which is "ok" for a watercooled build. I won't complain, though I'm surprised that's all you were able to achieve, as I can pull that off on air right now (my blocks won't arrive until next week). Main question though: why wasn't the memory overclocked? A watercooled Titan X has room to OC the memory by 20%, bumping the bandwidth up to about 400GB/s, which brings it quite a bit closer to the 512GB/s of the HBM1 in the Fury X.

Although we didn’t mention it the memory was running a mild OC at 7.4Gbps up from the stock 7gbps

6gbps – so yes about the same as your 20%

This is what concerns me about your understanding of overclocking. The Titan X memory is 7GHz at stock. At 7.4GHz you’re only running a 5.7% OC on the memory…
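The memory-clock arithmetic in this exchange can be checked with the usual peak-bandwidth formula: bus width in bits times effective data rate in Gbps, divided by 8, gives GB/s. A short sketch, assuming the Titan X's 384-bit GDDR5 bus and the Fury X's 4096-bit HBM1 interface at an effective 1Gbps per pin (these bus widths come from the cards' published specifications, not from this thread; the helper names are hypothetical):

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak memory bandwidth in GB/s: bits per transfer * rate / 8."""
    return bus_width_bits * data_rate_gbps / 8


def oc_percent(stock: float, overclocked: float) -> float:
    """Overclock expressed as a percentage above stock."""
    return (overclocked / stock - 1) * 100


TITAN_X_BUS = 384   # bits, GDDR5
FURY_X_BUS = 4096   # bits, HBM1

print(f"Titan X stock (7Gbps):  {peak_bandwidth_gbs(TITAN_X_BUS, 7.0):.1f} GB/s")
print(f"Titan X +20% (8.4Gbps): {peak_bandwidth_gbs(TITAN_X_BUS, 8.4):.1f} GB/s")
print(f"Fury X HBM1 (1Gbps):    {peak_bandwidth_gbs(FURY_X_BUS, 1.0):.1f} GB/s")
print(f"7.4Gbps vs 7Gbps stock: {oc_percent(7.0, 7.4):.1f}%")
print(f"7.4Gbps vs 6Gbps stock: {oc_percent(6.0, 7.4):.1f}%")
```

This reproduces both sides of the thread: a 20% memory overclock lands at roughly 400GB/s, while 7.4Gbps is only about a 5.7% overclock over a 7Gbps stock clock (it would be about 23% over 6Gbps).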

Hah you’re right, I was looking at titan stock memory settings not titan x. Yes it could have been pushed harder. Still though single Titan X was really good compared to Fury X – the issue it had was scaling. So unless scaling is significantly effected by memory bandwidth then I don’t think it changes the overall results much. When we re-run with overclocked furies we’ll spend a bit more time on the Titan X overclock too.

Don’t get me wrong…SLI scaling is definitely an issue and will still exist regardless. But you’d be surprised how important memory clocks can be depending on the test you’re running. I found this out when I was running the original GTX Titan Tri-Sli in the overclock.net unigine valley bench competition and came in second place. Leave the official redo for your next review of Fury X OC vs Titan X OC, as you mentioned. But to satisfy your own curiousity, try a higher memory clock and see what happens. If you were looking to squeeze out every last ounce out of your Titan X, you should check out http://overclocking.guide/nvidia-gtx-titan-x-volt-mod-pencil-vmod/ as well.

My Phobya nanogrease should be coming in tomorrow, so I'll finally be putting my blocks on as well. I'm going to compare my performance results with yours the next time you run your benches, so make sure you do a good job.

Excellent review. I'm also running an Asus Rampage V Extreme with a 5960X OC'd to 4.4GHz, so your data was really telling. I'm running a single EVGA GTX 980 Ti SC under water. I previously ran two Sapphire Tri-X OC R9 290s under water but opted to go with the single card.

Did you use a modded TitanX BIOS? What tool did you use to overclock the TitanX? I would like to try to replicate the parameters and run my single 980 Ti to see how close I get to your single TitanX data. Thank you.

Eh, i don’t really see the point of running AA in 5K Too bad it’s 5K btw, 4K is more reasonable. Too bad Fury X has problems with the Nvidia titles(W3 for example).

But man, the scaling and texture compression on AMD cards are absolutely amazing. If only they weren't bottlenecked by HBM1's 4GB of VRAM.

[…] Review: Titan X vs Fury X in a Triple CFX SLI Showdown […]